Compute PARALLELPERIOD without using PARALLELPERIOD in DAX

- Antriksh Sharma

- May 1, 2021

- 3 min read

Updated: May 2, 2021

PARALLELPERIOD is a DAX function that allows you to shift MONTH, QUARTER, or YEAR based on the the dates visible in the filter context, however performance can be worse when using time intelligence function such as PARALLELPERIOD itself.

In this article I will show you how you can compute PARALLELPERIOD calculation without using PARALLELPERIOD but before that let's see some calculations that you can achieve with PARALLELPERIOD

Below are some examples of calculations using PARALLELPERIOD.

For the sake of readability and ease of writing I am only going to use Previous Month calculation.

I have limited the results to 2007 by editing TREATAS

Now let's identify the performance of the Previous Month code using Performance Analyzer and DAX Studio, Please note that the data set hosted on DAX.Do only contains 100K rows, but to be able to see any significance in the optimization I am going to use the same data set but with 12.5 Million rows.

To get the performance data we need to get the extract of the performance analyzer

This is how the code generated by Power BI looks like:

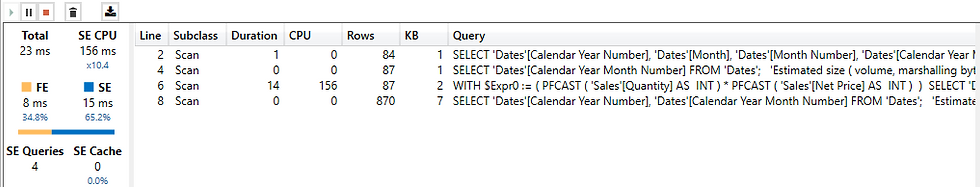

Here are the queries generated by the PARALLELPERIOD function:

For a simple Previous Month calculation it appears that PARALLELPERIOD is pretty performance intensive, because 53ms on 12.5 million rows is a lot.

By now if you are thinking of using PREVIOUSMONTH function then that also won't help too much as the queries generated by PREVIOUSMONTH are 6, so it appears we need to think in terms of vanilla DAX and let's see what we can do.

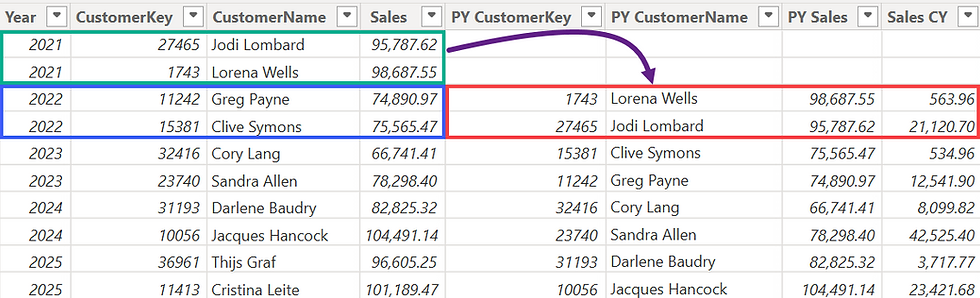

The first thing to do is to create a new measure, I will name it as PARALLELPERIOD Optimized, and it will have variables and the first one will basically retrieve the MAX Calendar Year Month Number from the current filter context, the reason for using the Calendar Year Month Number is that it will help in traversing back and froward easily.

The next variable will compute the immediate previous Calendar Year Month Number that is less than the MaxMonthNumber variable:

Now we will use this variable to calculate the sales amount for the previous month:

This is what this measure written so far returns, if you pay attention you can see that the subtotals are incorrect and the grand totals are missing.

to compute correct subtotals and grandtotals we need to initiate an iteration using SUMX so that we are iterating on the correct granularity.

So before the first variable all I need to do is add SUMX ( VALUES ...

Now we don't need to calculate MAX and we let the row context supply the value of Dates[Calendar Year Month Number] row by row

This is how the complete version of the code looks like:

Result with correct subtotals and grand totals:

Now it is time to test the performance of this code:

The performance has improved slightly and the total numbers of rows reported are also fewer as compared to the earlier test where each query returned around 2556 even though this is just an approximate number and the real numbers are actually reported in the Physical query plan ( In this case the total rows reported are much less than the ones iterated by the engine )

The approximate number of rows returned by the original measure using PARALLELPERIOD

Now let's move on and see how we can further optimize the code and bring down the total execution time even further.

The first optimization that came to my mind is that instead of using CALCULATE to compute the PrevMonthNumber what if I use MAXX and FILTER combination like the following:

To be honest I didn't expect to see any performance improvement or query reduction using the above construct but the results are shocking:

The new change has reduced the total Storage Engine queries to 6

Following the same ideology I thought if I can make any modifications to the Result variable and get any performance benefit and this is the change I made tot that variable:

In the previous variable I have pre computed everything using ADDCOLUMNS/VALUES construct and then used FILTER to get only the previous month and at last I used SUMX to compute the SUM of Total Sales, let's see the performance benefit of this variable:

This is how the complete version of the optimized code looks like:

The result is surprising as this version of the code runs in less than half the time of the original code:

And if I run the code without clearing the cache then the code makes excellent use of cache and runs in 1/5th of the total time of the original query:

Before wrapping up there is one other extreme performance optimization that we can try but that involves setting up Aggregations:

The performance with aggregations is the best one yet, without clearing the cache the performance hover around 10ms which is only achievable by not clearing the cache in non-aggregation models:

Comments