How many queries can Power BI engines cache?

- Antriksh Sharma

- Sep 6, 2022

- 4 min read

Updated: Oct 31, 2022

If you work with Power BI and SSAS Tabular one thing to know is that there are 2 engines in Analysis Services i.e. Formula & Storage Engine that manage everything inside your data model. These 2 engines work together to plan everything that happens internally in your model.

Storage Engine stores the data in a compressed format on RAM and Formula Engine translates the DAX code into something that Storage Engine can understand, when the Table is in import mode VertiPaq store is queried using xmSQL that is very similar to SQL and when a table is in DirectQuery then it sends queries to relational database using SQL.

When you write a DAX measure/column/calculated table, the Formula Engine converts your code into Logical and Physical Query plans, and to retrieve the data from the Storage Engine, it executes xmSQL/SQL queries, your CPU scans the Storage Engine segment by segment and retrieves data caches and joins them which are then later used by Formula Engine to answer the orignal query.

For every xmSQL/SQL query there is a data cache that is stored in a temporary area in memory, now there is a limit on how many data caches can be stored and in this blog let's take a look at that number and its side effects.

The model that we are going to use contains table from Contoso database, we are interested in Dates, Customer and Sales Table.

And we are going to use DAX Studio to investigate the queries, with Query Plan and Server Timings on

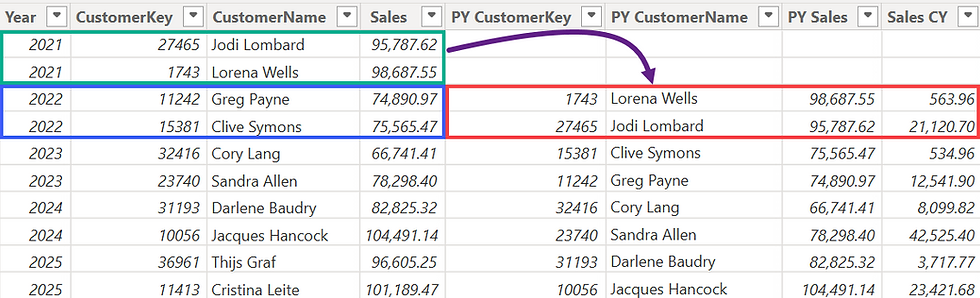

So we begin with a simple example first of computing customers in the Previous Year for each year.

As you can see in the current year we get the count of previous year. Now let's take a look at the queries that are generated

In the first query (Line = 4) Storage Engine scans and basically gets count of Sales[CustomerKey] for each year.

SELECT

'Dates'[Calendar Year Number],

DCOUNT ( 'Sales'[CustomerKey] )

FROM 'Sales'

LEFT OUTER JOIN 'Dates' ON 'Sales'[Order Date]='Dates'[Date];

On Line 6 it gets all the values of Date column from Date table

SELECT

'Dates'[Date]

FROM 'Dates';

On Line 8 it gets existing combination of Dates[Date] and Dates[Calendar Year number]

SELECT

'Dates'[Date], 'Dates'[Calendar Year Number]

FROM 'Dates';

From Line 12 onwards it starts computing the value of previous year because of the SAMEPERIODLASTYEAR that we have in the DAX code.

SELECT

DCOUNT ( 'Sales'[CustomerKey] )

FROM 'Sales'

LEFT OUTER JOIN 'Dates' ON 'Sales'[Order Date]='Dates'[Date]

WHERE

'Dates'[Date] IN ( 39225.000000, 39266.000000, 39307.000000, 40223.000000, 40264.000000, 40305.000000, 39102.000000, 39143.000000, 39184.000000, 39061.000000..[2191 total values, not all displayed] ) VAND

'Dates'[Date] IN ( 39102.000000, 39143.000000, 39184.000000, 39225.000000, 39266.000000, 39307.000000, 39116.000000, 39157.000000, 39198.000000, 39239.000000..[365 total values, not all displayed] ) ;

How do I know it is computing previous year calculation? pay attention to the dates highlighted in Blue and the number in Orange, the first list it has all the dates of Dates table and in the second one it has a list of dates in 2007, and we can confirm that with Excel:

And similarly the same computation happens in all of the other queries but some of them return nothing because the second list is checking for Year = 2005, 2006, and 2010 and the data model doesn't have any row in Sales table for these years, hence the Rows column of DAX Studio also shows a 0.

Now as you can see in previous screenshot that there are 9 Storage Engine queries that were executed to prepare the result, these queries return a Data Cache that is then consumed by the Formula Engine and are stored in the CPU L2 and L3 cache, and next time when a similar query is executed by the client tool the engine will know that instead of spending time in re-executing everything it can simply answer the request using the data cache.

But here is where things become a bit complex, once we know that data cache is cached we might think that it is going to happen in every scenario and no matter what the performance will be great, but that's not the case. There is a physical limitation on how many data caches are cached and to demonstrate that I am going to first re-run this existing query by unchecking the clear cache option of DAX Studio.

This time you can see all of the 8 queries are cached and are shown in SE Cache section.

So far we are computing result at the year level which is very simple, now we are going to move to higher granularity of Date and see how many queries are executed.

This time the engine executes 2,194 queries, one for each date in the Date table.

The important thing here is that if I rerun this code what happens?

We are still executing same number of Storage Engine queries and the code is extremely slow in the second and each subsequent execution.

So to prove my point that there is a limitation what I am going to do is apply a filter in SUMMARIZECOLUMNS so that we only compute the calculation for a few months of few years.

As you can see I am only returning result for the dates that are BETWEEN 2008-01-01 and 2009-04-30 and this time there are only 488 queries executed.

Now I am not going to clear the cache and if I run it once again all of the queries are cached:

If I change 200904 to 200905 and execute the code twice the queries are not cached:

So that mean the number of queries that can be cached is definitely lower than 518.

So I did some investigation and found out that the max queries it can cache is 513 and from 514 queries onwards the engine discards everything in the cache memory and re-executes the every query.

513 works:

514 doesn't 😭

Notice the massive time difference between the one with 513 and 514 queries scenario? It is night and day in terms of computers.

Alright, that was all I had for today, now hopefully I have give some valuable insights to you so that when you are optimizing your DAX queries you will know why your code is slow and you can explain to others as well the reasoning behind it.

Comments